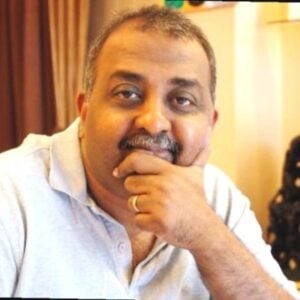

In an era where Artificial Intelligence is rapidly redrawing the boundaries of software engineering, leaders are tasked with balancing unprecedented speed with uncompromising quality. Srikant Krishnan, a key visionary at dMACQ Software Pvt. Ltd., offers a grounded perspective on this transition. In a quick chat, Krishnan discusses why the “death of coding” is a myth, how dMACQ integrates AI into core enterprise workflows, and why human judgment remains the ultimate, irreplaceable anchor in an increasingly automated world.

Q1. Do you believe AI will eventually make manual coding a thing of the past?

AI will dramatically reduce routine and repetitive coding, but it won’t eliminate human engineering. What disappears is not engineering, but mechanical effort. We will still need people to define requirements, make architectural trade-offs, reason about edge cases, and take accountability for reliability, security, and risk.

Coding is already shifting from “typing syntax” to “designing, validating, and operating systems.” Engineers will spend more time on system thinking, correctness, and long-term maintainability, while AI handles scaffolding and boilerplate. In that sense, AI elevates the role rather than replaces it.

Q2. How do you ensure software quality when AI-generated code is harder to peer-review than human code?

We treat AI-generated code exactly like any external or third-party contribution. It must pass the same automated test suites, security scans, dependency checks, and quality gates before it can be merged. There are no shortcuts.

What does change is how we review. Instead of focusing on syntax or formatting, reviews shift toward architecture, interfaces, edge cases, data flows, and failure modes. We also maintain traceability—what prompt was used, what changed, and why—so decisions are auditable. In practice, strong automation actually makes quality assurance easier, not harder. AI increases speed, but discipline and guardrails determine quality.

Q3. With AI handling the basics, how should we change the way we train junior developers?

Training has to move up the value stack. Junior developers must be strong in fundamentals—data structures, debugging, security principles, testing, and performance—because those are the skills needed to validate AI output.

They should also learn how to work with AI responsibly: writing precise specifications, breaking work into small verifiable units, and validating results through tests, logs, and monitoring. The goal is not to create faster typists, but engineers who can reason, question, and take ownership. AI is a multiplier, but only for people who understand what “correct” looks like.

Q4. Does AI-driven development make software more secure, or does it create new types of vulnerabilities?

It does both. AI can significantly improve security by catching common vulnerabilities early, expanding test coverage, and enforcing best practices at scale. However, it also introduces new risks—such as insecure patterns copied from examples, dependency sprawl, prompt injection, and accidental data exposure.

The solution is governance, not fear. Secure coding standards, SBOMs (Software Bill of Materials), automated scanning, secrets management, and human sign-off on sensitive changes are non-negotiable. AI should operate inside a controlled engineering system, not outside it. Security improves when AI is treated as a tool, not an authority.

Q5. What is the one traditional development skill that you believe is still irreplaceable by AI?

Sound engineering judgment. Turning ambiguous business needs into systems that are correct, resilient, secure, and maintainable—and making trade-offs under real constraints—is fundamentally human. AI can suggest options and patterns, but it cannot own outcomes. Accountability for production failures, customer impact, and long-term sustainability still rests with people. Complex debugging in live systems also remains deeply dependent on context and reasoning. Judgment is not a skill AI replaces; it’s the skill AI amplifies.

Q6. How do you see the business of software changing—will we stop paying for seats and start paying for the actual work the AI completes?

We are already moving away from seat-based pricing toward outcome- and usage-based models. Customers increasingly want to pay for delivered value—transactions processed, workflows automated, compliance achieved—not just licenses.

That doesn’t eliminate platform or governance costs, but it shifts expectations. Vendors will need to clearly demonstrate measurable impact while maintaining security, reliability, and regulatory compliance. The winners will be those who can connect AI activity directly to business KPIs, not just technical output.

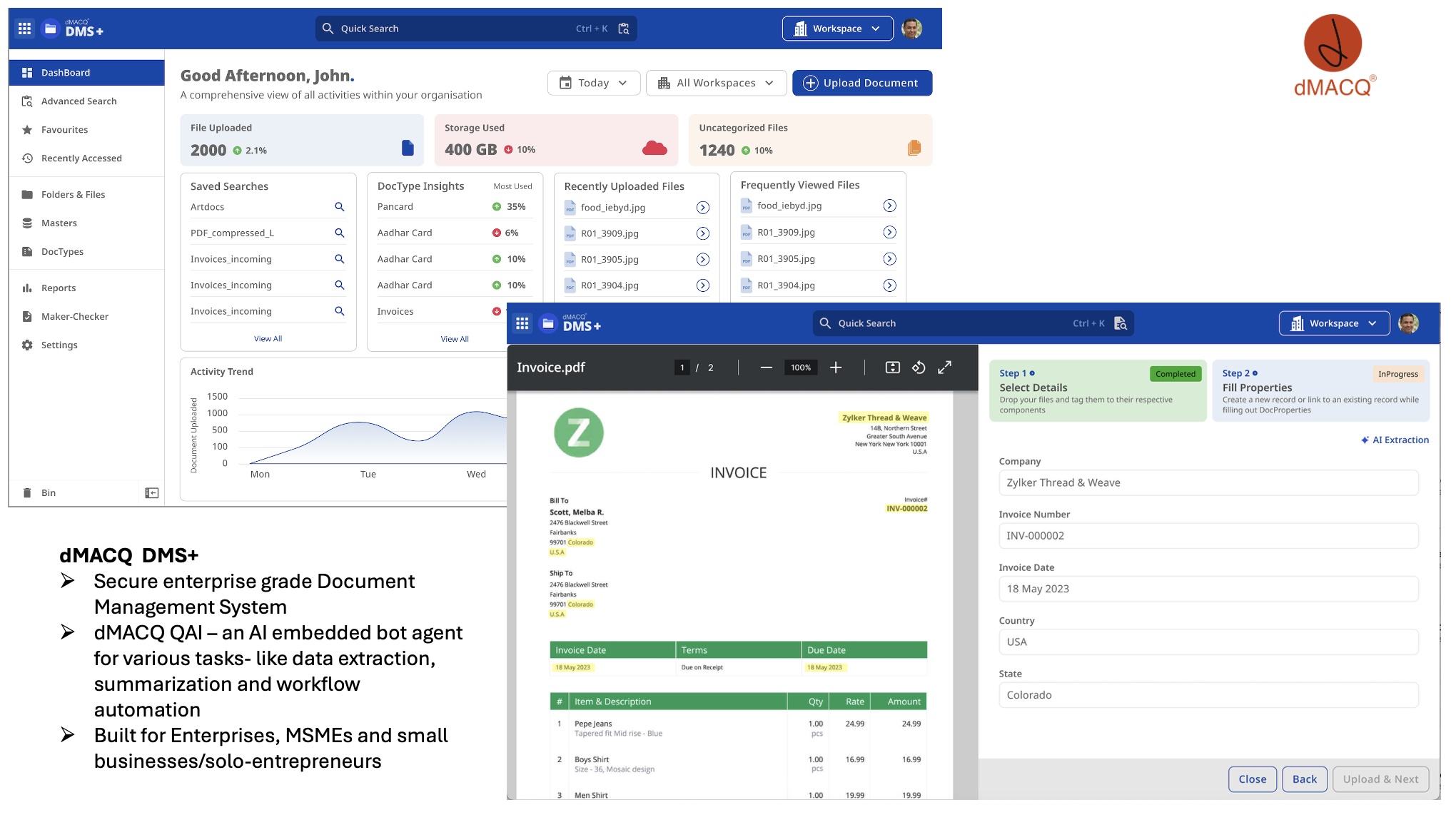

Q7. How is dMACQ adopting AI in its products, and where do you see the most tangible impact today?

At dMACQ, we focus on applied AI rather than AI for its own sake. Our adoption is centered on enterprise workflows where accuracy, traceability, and auditability matter: document management, compliance, vendor onboarding, board governance, and form-based business process automation. Our product, the Document Management System (DMS), is core to any digital transformation journey and forms the foundation of every business’s automation ambitions.

AI is embedded to automate data extraction, validation, classification, and decision support, while keeping humans in the loop for approvals and exceptions. The most tangible impact we see is reduced processing time, fewer manual errors, and faster compliance cycles for customers, especially in regulated environments.

Q8. From a business perspective, what are the biggest challenges you face while scaling AI responsibly?

The biggest challenge is balancing speed with trust. Enterprises want rapid automation, but they also need explainability, compliance, and predictable outcomes. That means investing heavily in governance, security, and change management alongside AI capabilities.

Another challenge is expectation management. AI is powerful, but it is not magic. Setting the right boundaries—what AI should automate, what requires human judgment, and how errors are handled—is critical for long-term adoption. From a business standpoint, success comes not from adopting AI fastest, but from adopting it safely, transparently, and in alignment with real operational needs.